Marketing pages for testing tools throw around large numbers: "Save 20+ hours monthly!" "Test 3 times faster!" If you are an engineering manager, evaluating these claims can be frustrating. How do you actually show that a browser extension like a form filler delivers concrete ROI for your team?

You don't need a month-long proof-of-concept. You just need a focused, seven-day trial scorecard.

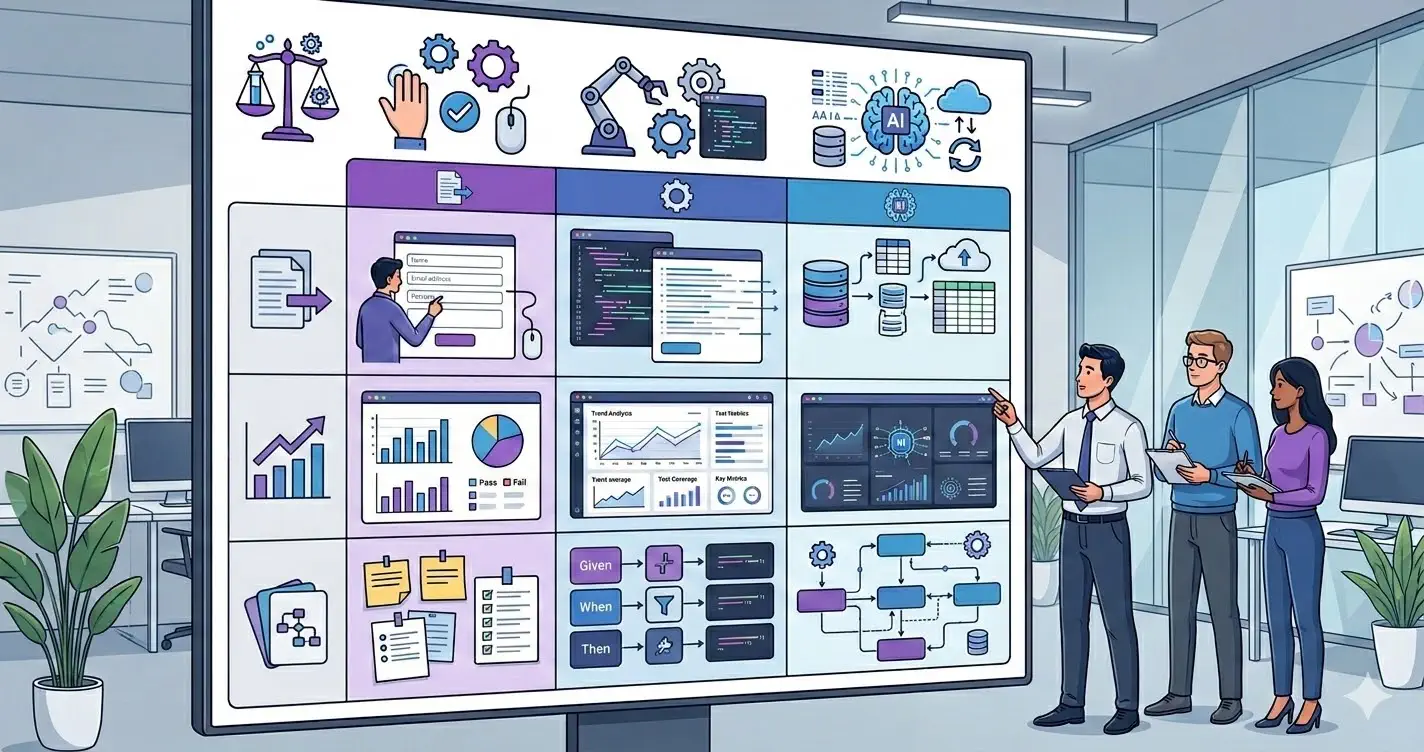

This rubric strips away feature checklists and focuses entirely on three operational realities: Time Savings, Signal Quality, and Coverage.

Step 1: Baseline the High-Friction Flows

Before installing anything new, identify the top two most complex, friction-heavy forms in your web app (e.g., a checkout page, or an enterprise onboarding flow).

Measure your baseline: Use a stopwatch to time how long it takes a QA tester to fill out those long forms manually, including the time spent getting past validation errors along the way. Record this number.

Step 2: Run the Trial Week

During the seven-day trial, have the team use the candidate tool (such as Mockfill or a rule-based alternative) exclusively for these flows.

Track the following metrics on your scorecard:

Measurement 1: Time Savings (Cycle Time Reduction)

The biggest gains tend to appear as cycle time reductions, specifically when rerunning the same flows after small UI iterations.

- Time to complete form: Record the time taken with the autofill extension and compare it to the manual baseline.

- Time to reproduce bugs: Measure the latency between reading a Jira ticket report and having the form fully populated locally to investigate.

- Formula: (Baseline Time - Extension Time) × Reruns Per Day × Working Days Per Month, with both times measured in the same unit. Converted to hours, does the result plausibly amount to meaningful time saved?
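As a rough illustration, the formula can be worked through in a few lines of Python. The function name and the input figures below are hypothetical; substitute the numbers from your own stopwatch measurements. The sketch assumes times are recorded in seconds and that only working days count.

```python
def monthly_hours_saved(baseline_seconds, extension_seconds,
                        reruns_per_day, working_days_per_month):
    """Apply the scorecard formula and convert the result from seconds to hours."""
    saved_per_run = baseline_seconds - extension_seconds
    total_seconds = saved_per_run * reruns_per_day * working_days_per_month
    return total_seconds / 3600


# Hypothetical inputs: a 4-minute manual fill, 20 seconds with the extension,
# 15 reruns per day, 20 working days per month.
hours = monthly_hours_saved(240, 20, 15, 20)
print(f"{hours:.1f} hours saved per tester per month")
```

With these made-up inputs the result is about 18.3 hours per tester per month. If your real measurements produce a figure closer to minutes than hours, the headline claim probably does not apply to your flows.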

Measurement 2: Bug Signal Quality

Tooling should reduce noise, not add it.

- Plausibility: Does the extension generate convincing data (e.g., realistic phone numbers, localized names), or does it spit out obvious placeholders (asdf, test@test)?

- Clarity in Repros: How many bug tickets this week included the exact values used to trigger the fault, instead of forcing the developer to guess? Realistic data produces higher-quality bug reports.
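To put a number on plausibility, one option is to paste a sample of generated values into a small script and count how many look like throwaway input. The sketch below is a crude heuristic, not a real data-quality check: the placeholder list and function names are assumptions, and you would extend the list with whatever your team tends to type.

```python
# Hypothetical list of throwaway values; extend it for your own team.
PLACEHOLDER_VALUES = {"asdf", "test", "foo", "bar", "qwerty",
                      "test@test", "test@test.com"}


def looks_like_placeholder(value):
    """Flag values that match a known placeholder or repeat a single character."""
    normalized = value.strip().lower()
    if not normalized:
        return False  # a field left blank is not a placeholder
    if normalized in PLACEHOLDER_VALUES:
        return True
    # One character repeated (e.g. "aaaa" or "1111") rarely resembles real input.
    return len(normalized) >= 3 and len(set(normalized)) == 1


def placeholder_ratio(values):
    """Return the fraction of sampled values that look like placeholders."""
    if not values:
        return 0.0
    flagged = sum(1 for value in values if looks_like_placeholder(value))
    return flagged / len(values)


# Made-up sample copied from a filled form.
sample = ["asdf", "test@test", "Marta Kowalska", "+48 601 234 567"]
print(placeholder_ratio(sample))
```

A ratio near zero suggests the tool is producing data a developer can take seriously in a ticket; a high ratio is an early warning for noisy bug reports.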

Measurement 3: Behavioral Coverage

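Bulk-filling can produce different browser events and validation timing than typing key by key. For example, a programmatic fill may set a field's value without firing the per-keystroke events a handler listens for.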

- State Syncing: Did the autofill correctly update your underlying framework state (React, Vue, Angular) and not just the visual DOM?

- Edge Cases Found: Track how many unexpected truncation or validation issues surfaced only because the tool provided edge-case data (e.g., very long surnames or unusual characters).
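One low-tech way to check state syncing is to compare what the form displayed after autofill with what the app actually submitted, for instance by copying the request payload from the browser's Network tab. The sketch below assumes both can be written down as flat dictionaries keyed by field name; the function name and sample values are hypothetical.

```python
def find_sync_mismatches(displayed, submitted):
    """Return fields whose displayed value differs from what the app submitted."""
    mismatches = {}
    for field, shown in displayed.items():
        sent = submitted.get(field)  # None if the field never reached the payload
        if sent != shown:
            mismatches[field] = (shown, sent)
    return mismatches


# Made-up example: the surname was visible in the form,
# but the framework state behind it was never updated.
displayed = {"email": "marta@example.com", "surname": "Kowalska"}
submitted = {"email": "marta@example.com", "surname": ""}
print(find_sync_mismatches(displayed, submitted))
```

Any field that appears in the result is a candidate state-syncing failure worth recording on the scorecard.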

The Decision Rubric

At the end of the trial week, review the scorecard:

- If cycle times dropped significantly but complex edge cases failed: You are likely evaluating a rigid, rule-based tool that cannot handle modern frameworks or complex custom validations.

- If bug signal quality is poor (noisy tickets): The tool’s data generation is too weak. Consider moving to a filler that detects field types automatically and generates realistic values.

- If time savings, signal quality, and state syncing all pass: This tool is a strong fit for speeding up your manual testing workflow.
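The rubric can also be written down as a small function so the whole team applies it the same way. This is a sketch only: the field names, the thresholds, and the fallback outcome for scorecards the rubric does not cover are all assumptions to adjust for your own context.

```python
from dataclasses import dataclass


@dataclass
class Scorecard:
    cycle_time_reduction: float  # fraction of baseline time saved, 0.0 to 1.0
    placeholder_ratio: float     # fraction of generated values that look fake
    state_sync_failures: int     # fields where framework state did not update
    edge_cases_handled: bool     # did complex validations and edge-case data work?


# Hypothetical thresholds; pick values that match your team's expectations.
MIN_TIME_REDUCTION = 0.5
MAX_PLACEHOLDER_RATIO = 0.1


def recommend(card):
    """Map a completed scorecard onto the three outcomes of the rubric."""
    fast = card.cycle_time_reduction >= MIN_TIME_REDUCTION
    clean_data = card.placeholder_ratio <= MAX_PLACEHOLDER_RATIO
    synced = card.state_sync_failures == 0

    if fast and not card.edge_cases_handled:
        return "rigid rule-based tool"
    if not clean_data:
        return "weak data generation"
    if fast and clean_data and synced:
        return "strong workflow fit"
    # Not covered by the rubric: treat as inconclusive (an assumption of this sketch).
    return "inconclusive"


print(recommend(Scorecard(0.8, 0.02, 0, True)))
```

Checking the outcomes in the same order as the rubric keeps the code easy to compare against the written version.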

Keep in mind that while extensions can greatly increase manual throughput, they are not a replacement for Cypress or Playwright assertions in CI. Treat them as what they are: a multiplier for human iteration.